Imagine being in a high-stakes business meeting where your AI-driven recommendation system suggests a game-changing investment. The numbers look promising, the predictions are solid, but when the CEO asks, “Why did the AI make this decision?” all you have is an awkward silence.

This is the reality of black-box AI: models that work their magic behind the scenes but leave us clueless about how they arrive at their conclusions. In an era where AI influences billion-dollar decisions, trusting a model without understanding its reasoning is like driving blindfolded: exciting, but extremely risky.

Enter Explainable AI (XAI), the hero of our story. XAI is not just a technical necessity; it’s a bridge that connects AI models and business understanding. It ensures that decision-makers, developers, and end-users alike can grasp how an AI model works, making AI-driven insights more actionable, trustworthy, and compliant with regulations.

In this post, we’ll look at how Explainable AI (XAI) can bridge that gap. We’ll break down the core concepts, walk through real-world use cases, and show why XAI is essential for businesses, startups, and AI developers.

Why AI Needs to Be Explainable

The more advanced AI models become, the harder they are to interpret. Traditional machine learning algorithms, like decision trees and linear regression, are relatively transparent—you can trace their decision-making step by step. However, modern AI systems, particularly deep learning models and neural networks, function more like black boxes. These models process vast amounts of data and learn complex patterns, but understanding why they make specific decisions can be a challenge, even for AI experts.

This lack of transparency raises critical concerns across industries, affecting trust, compliance, and innovation. Here’s why explainability—often referred to as Explainable AI (XAI)—is not just a nice-to-have feature but an absolute necessity.

1. Trust and Transparency

Would you trust a doctor who prescribes medication without explaining why? The same logic applies to AI. Organizations rely on AI to automate tasks, predict outcomes, and optimize decision-making, but if they can’t understand how these decisions are made, trust erodes quickly.

This issue is particularly pressing in high-stakes sectors like healthcare, finance, and law, where AI-driven decisions can impact people’s well-being, financial stability, and legal outcomes. If a hospital uses an AI model to determine treatment plans, doctors and patients must know why certain recommendations are made. If a bank denies a customer a loan, the applicant deserves an explanation beyond “the algorithm said so.”

Without transparency, businesses risk losing customers’ confidence, facing public backlash, or even making flawed decisions that could lead to financial or legal consequences.

2. Compliance and Ethics

Explainability isn’t just about trust—it’s also about accountability. Many governments and regulatory bodies now require AI-driven decisions to be interpretable and auditable.

For example, the General Data Protection Regulation (GDPR) in Europe restricts decisions based solely on automated processing and is widely read as giving individuals a right to meaningful information about the logic behind such decisions. Similarly, the EU AI Act classifies certain AI applications as “high-risk” and imposes transparency requirements intended to prevent discrimination and bias.

A real-world example of why this matters: in 2019, the Apple Card, issued by Goldman Sachs, came under fire after reports suggested its credit algorithm gave lower credit limits to women than to men, even when they had similar financial backgrounds. The issue sparked a public outcry and regulatory scrutiny, but without clear insight into the model’s decision-making process, it was difficult to determine whether bias was at play, or how to fix it.

Lack of explainability in AI makes it harder to detect and correct biases, which can lead to discriminatory outcomes, reputational damage, and even legal penalties.

3. Debugging and Improvement

AI models aren’t perfect—they make mistakes, and when they do, it’s crucial to understand why. However, debugging an opaque AI system can feel like searching for a needle in a haystack—except the haystack is on fire.

Explainable AI (XAI) provides insights into which features influence decisions, how confident the model is, and where potential biases exist. This helps developers pinpoint errors, optimize performance, and make adjustments that improve fairness and accuracy.

For example, if an AI hiring tool is rejecting qualified candidates from a specific demographic, explainability tools can help uncover whether certain irrelevant features (such as a candidate’s ZIP code) are influencing the outcome. By identifying and correcting these issues, companies can build AI models that are not only more effective but also more ethical.

Techniques for Explainable AI (XAI)

Different AI models require different XAI techniques to ensure transparency and accountability. While some models, like decision trees, are naturally interpretable, more complex models—such as deep neural networks—require additional methods to explain their decisions. Let’s explore some of the most common and effective techniques used to improve AI explainability.

1. Feature Importance

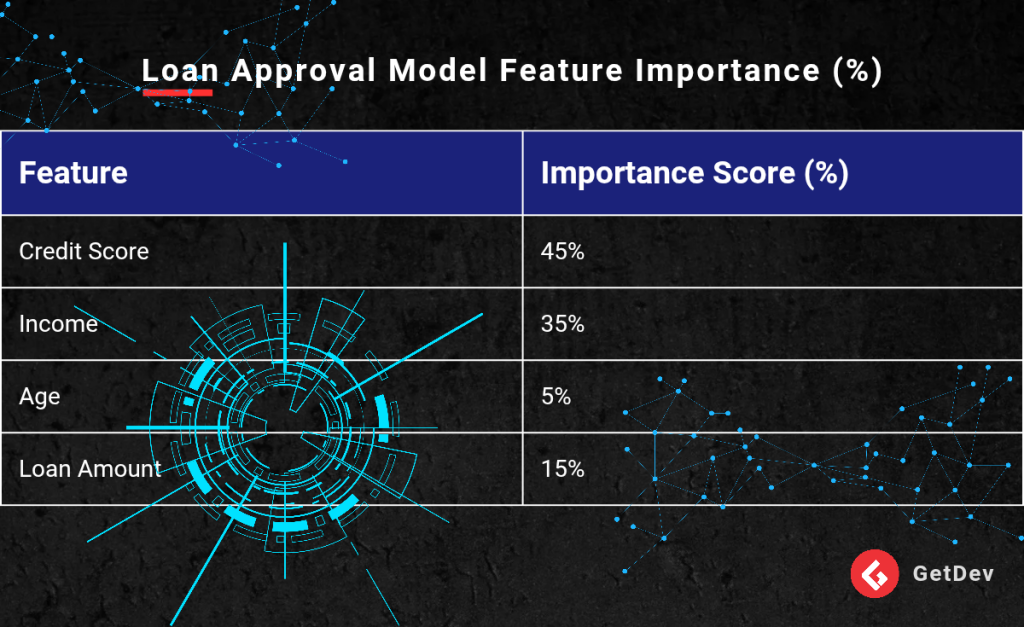

Feature importance analysis helps identify which factors (or “features”) contribute the most to an AI model’s predictions. By assigning importance scores to each feature, this method provides insight into how the model makes its decisions.

For instance, in a loan approval model, feature importance analysis might reveal that credit score and income are the two strongest predictors, while age has minimal influence. This knowledge helps businesses and stakeholders understand the reasoning behind AI-driven decisions and ensures that models are using relevant factors.

Example: Loan Approval Model Feature Importance

In this example, credit score is the most influential factor in determining loan approval, while age plays a minimal role. Understanding this distribution helps financial institutions validate whether their models align with business logic and fairness principles.
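One common way to compute such scores is permutation importance: shuffle one feature at a time and measure how much the model's accuracy drops. The sketch below is a minimal, from-scratch illustration, not a production implementation. The `approve` function, its weights, and the synthetic applicants are all hypothetical stand-ins for a real trained model and dataset.

```python
import random

def approve(applicant):
    """Hypothetical stand-in for a trained model: 1 = approve, 0 = deny."""
    credit_score, income, age = applicant  # age is deliberately ignored
    score = 0.7 * (credit_score / 850) + 0.3 * min(income / 100_000, 1.0)
    return 1 if score >= 0.6 else 0

def accuracy(model, rows, labels):
    """Fraction of rows where the model's output matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy drop observed when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(model, shuffled, labels))
    return importances

# Synthetic applicants (assumption): (credit_score, income, age).
data_rng = random.Random(42)
rows = [
    (data_rng.randint(300, 850), data_rng.randint(20_000, 150_000), data_rng.randint(21, 70))
    for _ in range(500)
]
# Labels come from the toy model itself, so baseline accuracy is perfect here.
labels = [approve(r) for r in rows]

importances = permutation_importance(approve, rows, labels, n_features=3)
for name, value in zip(["credit_score", "income", "age"], importances):
    print(f"{name}: {value:.3f}")
```

Because the toy model never reads `age`, shuffling it changes nothing and its importance comes out as zero, which is exactly the kind of sanity check a business stakeholder can follow.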

2. SHAP (SHapley Additive Explanations)

SHAP is a powerful explainability technique that assigns a score to each input feature, quantifying how much it positively or negatively contributes to the model’s final prediction. This method is based on cooperative game theory, ensuring fair and consistent attributions for each feature.

Example: Customer Churn Prediction

Imagine an AI model predicts that a customer will churn (stop using a service). SHAP analysis might reveal that the top contributing factors were:

- High support ticket volume (+0.3 influence on churn probability)

- Low engagement with the platform (+0.25 influence)

By understanding these contributions, businesses can proactively take action—such as improving customer support or increasing engagement—to reduce churn rates.

SHAP is particularly useful in domains like healthcare, finance, and marketing, where understanding individual predictions can drive better decision-making.
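To make the game-theory idea concrete, here is a small from-scratch sketch that computes exact Shapley values by enumerating every coalition of features. It does not use the `shap` library, and it simplifies things by comparing the customer against a single baseline customer rather than a background dataset. The `churn_probability` model, the feature names, and all numbers are hypothetical.

```python
from itertools import combinations
from math import factorial

def churn_probability(features):
    """Hypothetical churn model with one interaction term."""
    p = 0.10 + 0.03 * features["support_tickets"]
    if features["weekly_logins"] < 2:
        p += 0.25
        if features["support_tickets"] > 5:
            p += 0.10  # many tickets AND low engagement is worse than either alone
    return min(p, 1.0)  # tenure_months is deliberately unused

def shapley_values(model, instance, baseline):
    """Exact Shapley attribution of model(instance) - model(baseline)."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        # Features in the coalition take the instance's value, the rest the baseline's.
        mixed = {k: instance[k] if k in coalition else baseline[k] for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {name}) - value(members))
        phi[name] = total
    return phi

customer = {"support_tickets": 8, "weekly_logins": 1, "tenure_months": 24}
typical = {"support_tickets": 1, "weekly_logins": 5, "tenure_months": 12}

contributions = shapley_values(churn_probability, customer, typical)
gap = churn_probability(customer) - churn_probability(typical)
for name, value in contributions.items():
    print(f"{name}: {value:+.3f}")
print(f"sum of contributions: {sum(contributions.values()):.3f} (prediction gap: {gap:.3f})")
```

The contributions always add up to the gap between this customer's prediction and the baseline's, and a feature the model never uses receives zero. Exact enumeration grows exponentially with the number of features, so practical tools rely on approximations.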

3. LIME (Local Interpretable Model-Agnostic Explanations)

LIME explains one prediction at a time. Instead of trying to explain the entire model at once, it perturbs the input around a single data point, observes how the model’s output changes, and fits a simple, interpretable surrogate model to that small neighborhood.

Example: Loan Application Denial

Imagine an AI system denies a loan application. The underlying model may be a complex neural network with thousands of parameters, making it difficult to understand why the decision was made.

LIME approximates the model’s behavior near this one application using a simpler surrogate, typically a sparse linear model, whose weights can then be translated into a plain-language explanation:

“Your loan was denied primarily because your credit utilization was above 80%, and your annual income was below the required threshold.”

This level of transparency helps businesses communicate AI decisions effectively to customers and stakeholders, ensuring fairness and trust.
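The local-approximation idea can be sketched in a few dozen lines. The code below is a simplified, from-scratch illustration of a LIME-style local surrogate, not the `lime` library itself: it samples points around one applicant, weights them by closeness, and fits a weighted linear model. The `black_box` function, the perturbation scales, and the kernel width are all assumptions made for the example.

```python
import math
import random

def black_box(credit_utilization, annual_income):
    """Hypothetical opaque model: returns an approval probability."""
    z = 12.0 * (0.6 - credit_utilization) + (annual_income - 50_000) / 20_000
    return 1.0 / (1.0 + math.exp(-z))

def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def explain_locally(model, instance, scales, n_samples=2000, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, 1.0) for _ in instance]  # in scaled units
        point = [x + s * d for x, s, d in zip(instance, scales, offsets)]
        rows.append([1.0] + offsets)  # intercept column plus scaled offsets
        targets.append(model(*point))
        # Nearby samples count more than distant ones.
        weights.append(math.exp(-sum(d * d for d in offsets) / kernel_width ** 2))
    k = len(instance) + 1
    # Normal equations of weighted least squares: (X^T W X) beta = X^T W y.
    a = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(k)]
         for i in range(k)]
    b = [sum(w * r[i] * t for r, t, w in zip(rows, targets, weights)) for i in range(k)]
    return solve(a, b)

applicant = [0.85, 40_000]  # credit utilization, annual income
intercept, util_effect, income_effect = explain_locally(
    black_box, applicant, scales=[0.05, 5_000]
)
print(f"local effect of credit utilization: {util_effect:+.4f}")
print(f"local effect of annual income:      {income_effect:+.4f}")
```

Each coefficient describes how the approval probability moves near this applicant per one scaled step in that feature. A negative weight on credit utilization and a positive weight on income are what a customer-facing explanation like the one above would be built from.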

4. Counterfactual Explanations

Counterfactual explanations answer “What if?” questions by presenting alternative scenarios that could have changed the model’s decision. This method is particularly useful in helping users understand what they need to change to receive a different outcome.

Example: Loan Approval Scenario

“If your credit score were 20 points higher, you would have been approved for the loan.”

By showing clear actionable insights, counterfactual explanations empower users to take corrective actions—whether it’s improving credit history, increasing income, or reducing debt—to achieve a favorable outcome.

This technique is widely used in finance, healthcare, and hiring processes, where individuals seek clear guidance on how to improve their chances of success.
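A counterfactual can be found with a simple search: nudge one input until the decision flips and report the smallest change that works. The sketch below assumes a hypothetical `approve_loan` rule and a hypothetical applicant. Real counterfactual methods search over several features at once and restrict themselves to changes a person can realistically make.

```python
def approve_loan(credit_score, annual_income):
    """Hypothetical decision rule standing in for a trained model."""
    return credit_score + annual_income / 1000 >= 730

def smallest_credit_increase(model, credit_score, annual_income, step=5, max_increase=200):
    """Return the smallest credit-score increase (in `step` increments) that
    flips a denial into an approval, or None if none is found in range."""
    for increase in range(step, max_increase + 1, step):
        if model(credit_score + increase, annual_income):
            return increase
    return None

credit_score, annual_income = 660, 50_000
approved = approve_loan(credit_score, annual_income)
needed = smallest_credit_increase(approve_loan, credit_score, annual_income)

if not approved and needed is not None:
    print(f"If your credit score were {needed} points higher, "
          "you would have been approved for the loan.")
```

Holding income fixed keeps the explanation to a single actionable change, which is usually what an applicant wants to hear.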

The Business Case for Explainable AI

Adopting Explainable AI (XAI) isn’t just about compliance and ethics; it also offers significant business advantages. Companies that prioritize transparency in AI decision-making can gain customer trust, reduce risks, and drive adoption of AI-powered solutions. Let’s explore the key benefits in more detail.

1. Gaining Competitive Advantage

In today’s fast-evolving digital landscape, businesses that integrate AI into their operations must differentiate themselves to stay ahead of the competition. One of the most effective ways to do this is by implementing Explainable AI (XAI).

Startups and established enterprises that provide transparent, trustworthy AI solutions can build stronger relationships with their customers, partners, and investors. People are naturally skeptical of AI-driven decisions, especially when they cannot understand the reasoning behind them.

A company that proactively explains its AI decisions will have an edge over competitors that use black-box models. This transparency can lead to higher customer retention, increased investor confidence, and better regulatory compliance, all of which contribute to long-term business success.

Example: AI-Powered Financial Services

A fintech company that offers an AI-driven credit scoring system can stand out by providing clear justifications for loan approvals or rejections. Instead of simply saying “You were denied a loan”, the company could explain:

“Your credit utilization is too high, but if you reduce it by 10%, your loan approval chances will improve.”

Such transparency not only builds trust but also encourages customers to engage with and improve their financial standing.

2. Reducing Legal and Financial Risks

AI failures can have serious consequences, including lawsuits, regulatory fines, and reputational damage. This is particularly true in industries like finance, healthcare, and hiring, where AI decisions have real-world impacts on people’s lives.

Explainable AI minimizes these risks by ensuring that AI-driven decisions are:

- Justifiable – The company can provide a logical explanation for each outcome.

- Non-discriminatory – Biases in AI models can be identified and corrected before deployment.

- Compliant with regulations – Laws such as GDPR, the EU AI Act, and the Fair Credit Reporting Act impose transparency or notice requirements on automated decisions.

Example: AI in Hiring Practices

An AI-powered recruitment tool that screens job applicants could unintentionally discriminate against certain groups due to biased training data. If the model is not explainable, the company could face legal action for unfair hiring practices.

However, with XAI techniques like SHAP and LIME, the company can audit its model, identify biases, and adjust decision-making processes to ensure fairness and legal compliance.
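An audit like this often starts with a simpler check before any attribution method is applied: compare selection rates across groups. The sketch below computes the ratio between the lowest and highest selection rates and flags it when it falls under 0.8, the threshold used by the "four-fifths" rule of thumb in US employment guidance. The group labels and counts are made up for illustration, and passing or failing this check is a screening signal rather than a legal determination.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs -> rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest one."""
    return min(rates.values()) / max(rates.values())

# Hypothetical screening outcomes: 50 of 100 selected in group A, 30 of 100 in group B.
decisions = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # four-fifths rule of thumb

print(f"selection rates: {rates}")
print(f"impact ratio: {ratio:.2f}, needs review: {flagged}")
```

When a gap like this shows up, feature-level tools such as SHAP and LIME help trace which inputs are driving it.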

By prioritizing explainability, businesses can avoid costly lawsuits, fines, and reputational damage, ultimately saving money and safeguarding their brand image.

3. Driving User Adoption

Users are more likely to adopt AI-driven products and services when they understand how these technologies work. A lack of transparency can create skepticism and resistance, even if the AI model is highly effective.

Whether it’s an AI-powered recruitment tool, recommendation engine, or medical diagnosis system, explainability enhances user confidence, leading to higher adoption rates.

Example: AI in E-Commerce

Imagine an online retailer using an AI-driven product recommendation system. If customers don’t understand why they’re being shown certain products, they may be less likely to trust or engage with the recommendations.

However, if the system explains:

“You are seeing this product because you previously purchased similar items and rated them highly.”

customers are more likely to interact with the recommendations, leading to higher engagement, increased sales, and improved user satisfaction.

Case Study: Explainable AI in Healthcare

To illustrate the real-world impact of XAI, let’s examine a case where explainability played a crucial role in bridging the gap between AI models and business success.

Company: IBM Watson Health

Challenge:

Doctors were hesitant to trust AI-driven diagnoses because they couldn’t understand the reasoning behind the model’s recommendations. This skepticism led to low adoption rates of AI-powered diagnostic tools.

Solution:

IBM implemented SHAP and LIME, two explainability techniques that allowed doctors to see which medical factors influenced each AI diagnosis. By providing clear, interpretable explanations, IBM made it easier for doctors to trust the system.

Result:

- Increased adoption of AI-powered diagnostic tools.

- Improved patient outcomes, as doctors could combine AI insights with their medical expertise.

- Greater regulatory approval, as the system met transparency and accountability requirements.

This case study highlights how explainability is not just a technical requirement but a business enabler that drives trust, adoption, and success in AI applications.

The Future of Explainable AI (XAI)

As AI becomes more integrated into business and society, explainability will shift from an optional feature to a necessity. Three key trends will shape its future:

1. Stricter AI Regulations

Governments worldwide are introducing laws requiring AI transparency, such as the EU AI Act and stricter compliance rules in finance and healthcare. Businesses that prioritize explainability today will avoid legal risks and gain a competitive edge.

2. AI-Powered Explanations

AI is now being used to explain other AI models. Emerging self-explaining systems aim to provide real-time, human-friendly justifications for their decisions, reducing the need for manual interpretation. As these tools mature, expect AI-driven transparency to become more advanced and accessible.

3. Industry-Wide Adoption

Explainable AI will become a standard requirement across industries, from finance and healthcare to e-commerce and HR. Companies that fail to implement XAI risk losing user trust, facing regulatory fines, and falling behind competitors.

Conclusion: Making AI Work for Everyone

AI is actively shaping industries and transforming businesses, but without understanding its decision-making process, we risk operating in the dark and relying on systems that may be biased or untrustworthy. Explainable AI (XAI) is not optional—it’s essential. It bridges the gap between AI models and business decision-making, ensuring that AI-driven insights are transparent, actionable, and reliable. Without explainability, businesses may struggle to trust AI recommendations, leading to poor decisions and regulatory risks.

For developers, startups, and entrepreneurs, investing in XAI means building trustworthy AI solutions. For businesses, it enables smarter, more informed decisions. And for end-users, it fosters confidence in AI-powered products. Are you planning to implement Explainable AI in your business or startup? Share your thoughts!